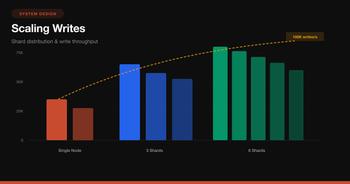

Every distributed system eventually faces the same problem: you have N servers and millions of keys, and you need to decide which server owns which key. The naive approach — server = hash(key) % N — works until you add or remove a server. When N changes, almost every key maps to a different server. Your caches go cold. Your data moves. Your system melts.

Consistent hashing solves this by ensuring that when the number of servers changes, only a minimal fraction of keys need to move.

The Problem with Modular Hashing

Let’s start with why hash(key) % N breaks down. Say you have 3 servers and 12 keys:

# With 3 servers

for key in range(12):

server = key % 3

print(f"key={key} → server {server}")

# key=0 → server 0 key=4 → server 1 key=8 → server 2

# key=1 → server 1 key=5 → server 2 key=9 → server 0

# key=2 → server 2 key=6 → server 0 key=10 → server 1

# key=3 → server 0 key=7 → server 1 key=11 → server 2Now add a 4th server. N changes from 3 to 4:

# With 4 servers — almost everything moved

for key in range(12):

old = key % 3

new = key % 4

moved = "MOVED" if old != new else "same"

print(f"key={key}: server {old} → server {new} {moved}")

# key=0: 0 → 0 same

# key=1: 1 → 1 same

# key=2: 2 → 2 same

# key=3: 0 → 3 MOVED ← was server 0, now server 3

# key=4: 1 → 0 MOVED

# key=5: 2 → 1 MOVED

# key=6: 0 → 2 MOVED

# key=7: 1 → 3 MOVED

# key=8: 2 → 0 MOVED

# key=9: 0 → 1 MOVED

# key=10: 1 → 2 MOVED

# key=11: 2 → 3 MOVED9 out of 12 keys moved — 75%. In a production cache cluster, that means 75% cache miss rate immediately after scaling. Your database gets hammered. Latency spikes. Alerts fire.

How Consistent Hashing Works

The core idea: map both keys and servers onto the same circular hash space (a ring), then assign each key to the nearest server going clockwise.

The Algorithm

- Create a ring of size 2³² (or any large hash space)

- Hash each server to a position on the ring:

position = hash(server_id) - Hash each key to a position on the ring:

position = hash(key) - Walk clockwise from the key’s position until you hit a server — that server owns the key

When a server is added, it only takes keys from its immediate clockwise neighbor. When a server is removed, its keys only move to the next server clockwise. Everything else stays put.

Implementation

Here’s a clean implementation in Python:

import hashlib

from bisect import bisect_right

class ConsistentHash:

def __init__(self, nodes=None, vnodes=150):

self.vnodes = vnodes

self.ring = {} # hash_value → node

self.sorted_keys = [] # sorted list of hash values on the ring

if nodes:

for node in nodes:

self.add_node(node)

def _hash(self, key: str) -> int:

"""Hash a key to a position on the ring (0 to 2^32 - 1)."""

digest = hashlib.md5(key.encode()).hexdigest()

return int(digest[:8], 16)

def add_node(self, node: str):

"""Add a physical node with its virtual nodes to the ring."""

for i in range(self.vnodes):

vnode_key = f"{node}:vnode{i}"

hash_val = self._hash(vnode_key)

self.ring[hash_val] = node

self.sorted_keys.append(hash_val)

self.sorted_keys.sort()

def remove_node(self, node: str):

"""Remove a node and all its virtual nodes from the ring."""

for i in range(self.vnodes):

vnode_key = f"{node}:vnode{i}"

hash_val = self._hash(vnode_key)

del self.ring[hash_val]

self.sorted_keys.remove(hash_val)

def get_node(self, key: str) -> str:

"""Find which node owns this key."""

if not self.sorted_keys:

raise Exception("No nodes in the ring")

hash_val = self._hash(key)

# Find the first node position >= key's hash (clockwise walk)

idx = bisect_right(self.sorted_keys, hash_val)

# Wrap around to the first node if we've gone past the end

if idx == len(self.sorted_keys):

idx = 0

return self.ring[self.sorted_keys[idx]]Usage:

ch = ConsistentHash(["server-A", "server-B", "server-C"])

# Map some keys

for key in ["user:1001", "user:1002", "session:abc", "cache:homepage"]:

print(f"{key} → {ch.get_node(key)}")

# Add a new server — only ~1/N keys will move

ch.add_node("server-D")

# Check which keys moved

for key in ["user:1001", "user:1002", "session:abc", "cache:homepage"]:

print(f"{key} → {ch.get_node(key)}")And in JavaScript/TypeScript:

const crypto = require('crypto');

class ConsistentHash {

constructor(nodes = [], vnodes = 150) {

this.vnodes = vnodes;

this.ring = new Map(); // hash → node

this.sortedKeys = []; // sorted hash positions

for (const node of nodes) {

this.addNode(node);

}

}

_hash(key) {

return parseInt(

crypto.createHash('md5').update(key).digest('hex').slice(0, 8),

16

);

}

addNode(node) {

for (let i = 0; i < this.vnodes; i++) {

const hash = this._hash(`${node}:vnode${i}`);

this.ring.set(hash, node);

this.sortedKeys.push(hash);

}

this.sortedKeys.sort((a, b) => a - b);

}

removeNode(node) {

for (let i = 0; i < this.vnodes; i++) {

const hash = this._hash(`${node}:vnode${i}`);

this.ring.delete(hash);

}

this.sortedKeys = this.sortedKeys.filter(k => this.ring.has(k));

}

getNode(key) {

if (this.sortedKeys.length === 0) throw new Error('Empty ring');

const hash = this._hash(key);

// Binary search for the first position >= hash

let lo = 0, hi = this.sortedKeys.length;

while (lo < hi) {

const mid = (lo + hi) >>> 1;

if (this.sortedKeys[mid] < hash) lo = mid + 1;

else hi = mid;

}

// Wrap around

const idx = lo === this.sortedKeys.length ? 0 : lo;

return this.ring.get(this.sortedKeys[idx]);

}

}Why Keys Barely Move

This is the key insight. Let’s say you have 4 servers (A, B, C, D) on the ring. Each server owns the arc from the previous server to itself (going clockwise).

When you add server E between C and D:

- E takes ownership of the arc between C and E

- Only keys in that specific arc move from D to E

- Keys owned by A, B, and C are completely unaffected

- Roughly 1/N keys move (where N is the number of servers)

When you remove server B:

- B’s keys move to the next server clockwise (C)

- Only B’s keys move — everything else stays

- Again, roughly 1/N keys move

Compare this with modular hashing where (N-1)/N keys move — that’s 75% for 3→4 servers versus ~25% for consistent hashing.

The Virtual Node Solution

There’s a flaw in basic consistent hashing: with only a few servers, the ring positions might cluster together, giving one server far more keys than others. With 3 servers, one might end up with 60% of the keys while another gets 10%.

Virtual nodes (vnodes) fix this by mapping each physical server to many positions on the ring.

Instead of hashing “server-A” once, you hash “server-A:vnode0”, “server-A:vnode1”, …, “server-A:vnode149”. Each physical server gets 100-200 virtual positions scattered around the ring. The result is near-uniform distribution.

How Many Virtual Nodes?

The number of vnodes is a trade-off:

| Vnodes per server | Load std deviation | Memory per server | Lookup time |

|---|---|---|---|

| 1 | Very high (~50%) | Minimal | O(log N) |

| 10 | ~20% | Low | O(log 10N) |

| 100 | ~10% | Moderate | O(log 100N) |

| 150 | ~5-7% | Moderate | O(log 150N) |

| 500 | ~2-3% | Higher | O(log 500N) |

150 vnodes per server is the common sweet spot — good enough balance without excessive memory. Cassandra uses 256 vnodes by default.

Weighted Nodes

Virtual nodes also let you assign more capacity to beefier servers. A server with 2x the RAM gets 2x the vnodes:

class WeightedConsistentHash(ConsistentHash):

def add_node(self, node: str, weight: int = 1):

"""Add a node with a weight multiplier for vnodes."""

effective_vnodes = self.vnodes * weight

for i in range(effective_vnodes):

vnode_key = f"{node}:vnode{i}"

hash_val = self._hash(vnode_key)

self.ring[hash_val] = node

self.sorted_keys.append(hash_val)

self.sorted_keys.sort()

# Large server gets 2x the keys

ch = WeightedConsistentHash()

ch.add_node("small-server-1", weight=1) # 150 vnodes

ch.add_node("small-server-2", weight=1) # 150 vnodes

ch.add_node("large-server-3", weight=2) # 300 vnodes → ~2x keysReplication with Consistent Hashing

In production, you don’t just assign a key to one server. You replicate it to multiple servers for fault tolerance. The pattern: assign the key to the first N distinct physical servers going clockwise.

def get_replicas(self, key: str, replica_count: int = 3) -> list:

"""Get N distinct physical servers for replication."""

if not self.sorted_keys:

raise Exception("No nodes in the ring")

hash_val = self._hash(key)

idx = bisect_right(self.sorted_keys, hash_val)

replicas = []

seen = set()

for i in range(len(self.sorted_keys)):

pos = (idx + i) % len(self.sorted_keys)

node = self.ring[self.sorted_keys[pos]]

if node not in seen:

replicas.append(node)

seen.add(node)

if len(replicas) == replica_count:

break

return replicasImportant: Skip virtual nodes of the same physical server. If server-A has vnodes at positions 100, 105, and 110, you don’t want all 3 replicas on server-A. Walk until you find replica_count distinct physical servers.

Rack-Aware Replication

DynamoDB and Cassandra take this further — replicas must be in different failure domains (racks, availability zones):

def get_rack_aware_replicas(self, key, replica_count=3):

hash_val = self._hash(key)

idx = bisect_right(self.sorted_keys, hash_val)

replicas = []

seen_nodes = set()

seen_racks = set()

for i in range(len(self.sorted_keys)):

pos = (idx + i) % len(self.sorted_keys)

node = self.ring[self.sorted_keys[pos]]

rack = self.node_to_rack[node]

# Skip if same physical node or same rack (if we have alternatives)

if node in seen_nodes:

continue

if rack in seen_racks and len(seen_racks) < self.total_racks:

continue

replicas.append(node)

seen_nodes.add(node)

seen_racks.add(rack)

if len(replicas) == replica_count:

break

return replicasReal-World Usage

Amazon DynamoDB

DynamoDB uses consistent hashing as its core partitioning strategy (described in the original Dynamo paper). Each table’s partition key is hashed to determine which storage node owns it.

Key design choices:

- Preference list: Each key has a list of N nodes responsible for it (typically 3)

- Sloppy quorum: Writes go to the first N healthy nodes on the ring — if a node is down, the next node temporarily takes over (hinted handoff)

- Vector clocks for conflict resolution when replicas diverge

Apache Cassandra

Cassandra uses consistent hashing with vnodes for data distribution across the cluster:

# cassandra.yaml

num_tokens: 256 # Number of vnodes per node

partitioner: org.apache.cassandra.dht.Murmur3PartitionerWhen you run nodetool status, you see each node’s token range ownership:

Datacenter: dc1

===============

Status Address Load Tokens Owns

UN 10.0.0.1 256 GB 256 33.2%

UN 10.0.0.2 248 GB 256 33.5%

UN 10.0.0.3 252 GB 256 33.3%The near-equal “Owns” percentages are thanks to vnodes distributing tokens evenly.

Redis Cluster

Redis Cluster uses a fixed-size variant — 16,384 hash slots instead of a continuous ring:

# Each key maps to a slot

CLUSTER KEYSLOT "user:1001"

# Returns: 5474

# Slots are assigned to nodes

# Node A: slots 0-5460

# Node B: slots 5461-10922

# Node C: slots 10923-16383The hash slot approach simplifies the protocol — nodes exchange a 16K bitmap of slot assignments instead of maintaining a full ring.

def redis_slot(key: str) -> int:

"""Redis uses CRC16 mod 16384 for slot assignment."""

# Handle hash tags: {user}.name → hash on "user" only

start = key.find('{')

if start != -1:

end = key.find('}', start + 1)

if end != -1 and end != start + 1:

key = key[start + 1:end]

crc = crc16(key.encode())

return crc % 16384Redis also supports hash tags — {user:1001}.profile and {user:1001}.settings hash to the same slot, ensuring they live on the same node. This enables multi-key operations.

Jump Consistent Hashing

Google’s Jump Consistent Hash is an alternative that’s faster and perfectly balanced — but only works when servers are numbered sequentially (no arbitrary additions/removals):

def jump_hash(key: int, num_buckets: int) -> int:

"""Google's Jump Consistent Hash — O(ln n), zero memory."""

b, j = -1, 0

while j < num_buckets:

b = j

key = ((key * 2862933555777941757) + 1) & 0xFFFFFFFFFFFFFFFF

j = int((b + 1) * (1 << 31) / ((key >> 33) + 1))

return bProperties:

- O(ln n) time, zero memory — no ring to maintain

- Perfectly balanced — each bucket gets exactly 1/N keys

- Minimal movement — only 1/N keys move when adding a bucket

- Limitation: Only supports adding/removing at the end (no arbitrary node removal)

Use Jump Hash for stateless load balancing where servers are numbered 0 to N-1. Use ring-based consistent hashing when servers can join and leave arbitrarily.

Handling Hotspots

Even with consistent hashing and vnodes, hotspots can occur when certain keys get disproportionate traffic (e.g., a celebrity’s profile, a viral post).

Bounded Load Consistent Hashing

Google’s approach: set a maximum load threshold per server. If the target server is over capacity, walk to the next server on the ring:

def get_node_bounded(self, key: str, max_load_factor: float = 1.25):

"""Route to the next server with capacity, not just the first."""

hash_val = self._hash(key)

idx = bisect_right(self.sorted_keys, hash_val)

avg_load = self.total_keys / len(self.physical_nodes)

max_load = avg_load * max_load_factor

for i in range(len(self.sorted_keys)):

pos = (idx + i) % len(self.sorted_keys)

node = self.ring[self.sorted_keys[pos]]

if self.node_loads[node] < max_load:

self.node_loads[node] += 1

return node

# Fallback: all nodes at capacity, use the original target

return self.ring[self.sorted_keys[idx % len(self.sorted_keys)]]This guarantees no server gets more than 1.25x the average load, at the cost of slightly more key movement during rebalancing.

Performance Characteristics

| Operation | Time Complexity | Notes |

|---|---|---|

| Lookup key | O(log V×N) | Binary search on sorted ring (V=vnodes, N=nodes) |

| Add node | O(V log V×N) | Insert V vnode positions + re-sort |

| Remove node | O(V log V×N) | Remove V positions |

| Keys moved on add | O(K/N) | K=total keys, N=total nodes |

| Keys moved on remove | O(K/N) | Same — minimal disruption |

| Memory | O(V×N) | Ring stores V positions per node |

For a cluster of 100 servers with 150 vnodes each, the ring has 15,000 entries. A binary search over 15,000 entries is ~14 comparisons — essentially instant.

When to Use Consistent Hashing

| Scenario | Use consistent hashing? |

|---|---|

| Distributed cache (Memcached, Redis) | Yes — minimizes cache invalidation on scaling |

| Database sharding | Yes — Cassandra, DynamoDB, CockroachDB all use it |

| Load balancing (sticky sessions) | Yes — route same user to same backend |

| CDN edge routing | Yes — map content to nearest edge node |

| Single database, no sharding | No — just use primary key indexing |

| Static cluster, never changes | No — simple modular hash is fine |

Summary

Consistent hashing is the foundational algorithm behind distributed data systems:

- The ring maps keys and servers to the same hash space — keys walk clockwise to find their server

- Minimal movement: adding/removing a server only remaps ~1/N keys instead of ~100%

- Virtual nodes fix load imbalance by giving each server 100-200 positions on the ring

- Replication walks the ring to find N distinct physical servers (rack-aware for fault tolerance)

- Production systems like DynamoDB, Cassandra, and Redis Cluster all build on this primitive

The algorithm is simple — a hash function, a sorted array, and a binary search. But it’s the reason your distributed cache doesn’t collapse every time you add a server.