“There are only two hard things in Computer Science: cache invalidation and naming things.” — Phil Karlton

This quote has been repeated so often it’s become a cliché. But the reason it persists is that it’s true: caching is easy to add and brutally hard to get right. A naive cache saves you from a slow database. A well-designed caching strategy saves you from building a bigger database at all.

This article covers caching from first principles: why we cache, where caches live, how to invalidate them, what to do when things go wrong, and the production patterns used by systems serving millions of requests per second.

Why Cache? The Latency Gap

The fundamental reason for caching is the latency gap between memory and everything else:

| Storage | Latency | Relative to L1 cache |
|---|---|---|
| L1 CPU cache | 0.5 ns | 1x |
| L2 CPU cache | 7 ns | 14x |
| RAM | 100 ns | 200x |
| SSD random read | 150,000 ns (0.15 ms) | 300,000x |
| Redis GET (in-datacenter round trip) | 500,000 ns (0.5 ms) | 1,000,000x |
| Database query | 5-100 ms | 10M-200Mx |
| Cross-region API | 50-150 ms | 100M-300Mx |

A typical PostgreSQL query takes 5-100 milliseconds; a Redis lookup returns in about 0.5 milliseconds. That’s a 10-200x speedup, and when you’re handling 50,000 requests per second, it can decide whether you need 3 servers or 300.
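A rough model connects these numbers to hit rate. The sketch below assumes cache-aside: every request pays the cache round trip, and misses also pay the database query. It ignores the time spent writing the value back. The function name is illustrative.

```javascript
// Expected per-request latency under cache-aside (write-back time ignored):
// every request pays the cache lookup; only misses also pay the database query.
function effectiveLatencyMs(hitRate, cacheMs, dbMs) {
  return cacheMs + (1 - hitRate) * dbMs;
}

// Values from the table above: 0.5 ms cache, 50 ms query
for (const hitRate of [0.5, 0.9, 0.99]) {
  console.log(`hit rate ${hitRate}: ${effectiveLatencyMs(hitRate, 0.5, 50).toFixed(2)} ms`);
}
```

With a 50 ms query, a 90% hit rate brings the average to about 5.5 ms and 99% to about 1 ms. Most of the remaining latency comes from the misses, which is why hit rate matters so much later on.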

The Caching Layers

Every request passes through multiple potential cache layers. Each one that returns a hit prevents the request from going deeper:

Layer 0: Browser Cache

The fastest cache hit is one that never leaves the client. HTTP cache headers control this:

```http
# Cache for 1 hour, then revalidate with the ETag
Cache-Control: max-age=3600, must-revalidate
ETag: "abc123"

# Immutable assets (fingerprinted filenames)
# /static/app.a1b2c3d4.js: the filename changes when the content changes
Cache-Control: max-age=31536000, immutable

# Private, user-specific content
Cache-Control: private, max-age=60
```

Conditional requests avoid re-downloading unchanged resources:

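```http
# Client sends:
GET /api/users/42 HTTP/1.1
If-None-Match: "abc123"

# Server responds (if unchanged):
HTTP/1.1 304 Not Modified
ETag: "abc123"
# Body: empty, so the client uses its cached version
```

A 304 still costs a round trip, but it skips the response body. Combined with max-age, which avoids the request entirely while a response is fresh, this can eliminate 60-80% of static asset downloads.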
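On the origin, the ETag check comes down to a string comparison. This sketch derives the ETag from a SHA-1 hash of the body (an assumption; any stable content fingerprint works) and returns either a full 200 or an empty 304. The function names are hypothetical, and wiring them into a web framework is left out.

```javascript
const crypto = require('crypto');

// Strong ETag from a hash of the body (assumed scheme; any stable fingerprint works)
function etagFor(body) {
  return `"${crypto.createHash('sha1').update(body).digest('hex').slice(0, 16)}"`;
}

// Returns a full 200 or an empty 304, depending on the request's If-None-Match header
function conditionalResponse(body, ifNoneMatch) {
  const etag = etagFor(body);
  // If-None-Match may list several ETags, possibly weak (W/"...") ones, or be "*"
  const candidates = (ifNoneMatch || '')
    .split(',')
    .map((tag) => tag.trim().replace(/^W\//, ''))
    .filter(Boolean);

  if (candidates.includes('*') || candidates.includes(etag)) {
    return { status: 304, headers: { ETag: etag }, body: '' };
  }
  return {
    status: 200,
    headers: { ETag: etag, 'Cache-Control': 'max-age=3600, must-revalidate' },
    body,
  };
}
```

The first request gets a 200 with an ETag. Repeating the request with that ETag in If-None-Match gets a bodiless 304 until the content changes.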

Layer 1: CDN Edge Cache

Large CDNs cache responses at hundreds of points of presence worldwide. A user in Tokyo hits the Tokyo edge node instead of your US-East server:

```javascript
// CloudFront behavior: cache API responses for 5 minutes,
// set via the Cache-Control header from your origin (Express shown):
res.set('Cache-Control', 'public, max-age=300, s-maxage=300');
// s-maxage applies to shared caches (CDN) only and overrides max-age there

// Vary header: keep a separate cached copy per value of these request headers
res.set('Vary', 'Accept-Language, Authorization');
// The CDN caches separate responses for each language + user combination
```

When to use CDN caching:

- Static assets (always — with fingerprinted filenames and long max-age)

- Public API responses that change infrequently (product catalogs, blog content)

- GraphQL responses (requires cache key on query body, not just URL)

When NOT to use CDN caching:

- User-specific data (unless using Vary: Authorization with care)

- Real-time data (stock prices, live scores)

- POST/PUT/DELETE responses
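For the GraphQL case, the cache key has to come from the request body. Here is a sketch, assuming requests use the usual `{ query, variables, operationName }` JSON shape. Variables are serialized with sorted keys so that equivalent requests map to the same key. The hashing scheme is illustrative and does not reflect any particular CDN's built-in behavior.

```javascript
const crypto = require('crypto');

// JSON with object keys sorted, so {a, b} and {b, a} serialize identically
function canonicalJson(value) {
  if (Array.isArray(value)) return `[${value.map(canonicalJson).join(',')}]`;
  if (value !== null && typeof value === 'object') {
    const fields = Object.keys(value)
      .sort()
      .map((k) => `${JSON.stringify(k)}:${canonicalJson(value[k])}`);
    return `{${fields.join(',')}}`;
  }
  return JSON.stringify(value);
}

// Cache key for a POSTed GraphQL request: the URL plus a hash of the operation
function graphqlCacheKey(url, { query = '', variables = {}, operationName = null }) {
  const payload = canonicalJson({ operationName, query: query.trim(), variables });
  const digest = crypto.createHash('sha256').update(payload).digest('hex');
  return `${url}#${digest}`;
}
```

Only exact query text is matched here. Two queries that differ just in formatting get different keys, which is safe but lowers the hit rate.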

Layer 2: Reverse Proxy Cache

Nginx or Varnish sits in front of your application servers, caching full HTTP responses:

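```nginx
# Nginx proxy cache configuration (proxy_cache_path belongs in the http {} block)
proxy_cache_path /var/cache/nginx levels=1:2
                 keys_zone=api_cache:100m
                 max_size=10g
                 inactive=60m;

server {
    location /api/ {
        proxy_pass http://app_servers;   # placeholder upstream, defined elsewhere

        proxy_cache api_cache;
        proxy_cache_valid 200 5m;        # Cache 200s for 5 min
        proxy_cache_valid 404 1m;        # Cache 404s for 1 min

        # $request_uri already includes the query string (e.g. ?page=3),
        # so appending $arg_page separately is redundant
        proxy_cache_key "$scheme$host$request_uri";

        # Serve stale cache if the origin errors out or times out
        proxy_cache_use_stale error timeout http_500;

        # HIT, MISS, STALE, BYPASS, EXPIRED: invaluable for debugging
        add_header X-Cache-Status $upstream_cache_status;
    }
}
```

Layer 3: Application Cache (Redis / Memcached)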

This is where most application-level caching happens. Your application code reads from a distributed cache before hitting the database:

```mermaid
graph LR
    A[App Server 1] --> R[(Redis Cluster)]
    B[App Server 2] --> R
    C[App Server 3] --> R
    R -.->|MISS| D[(PostgreSQL)]
    style A fill:#2563eb,stroke:#1d4ed8,color:#fff
    style B fill:#2563eb,stroke:#1d4ed8,color:#fff
    style C fill:#2563eb,stroke:#1d4ed8,color:#fff
    style R fill:#c84b2f,stroke:#991b1b,color:#fff
    style D fill:#059669,stroke:#047857,color:#fff
```

Redis vs Memcached:

| Feature | Redis | Memcached |
|---|---|---|
| Data structures | Strings, hashes, lists, sets, sorted sets, streams | Strings only |
| Persistence | RDB snapshots + AOF | None |
| Clustering | Redis Cluster (built-in) | Client-side sharding |
| Lua scripting | Yes (atomic operations) | No |
| Memory efficiency | Moderate (metadata overhead) | Better (slab allocator) |
| Pub/Sub | Yes | No |
| Best for | Most use cases | Simple key-value, maximum throughput |

Redis wins for almost everything unless you need pure key-value at maximum throughput with minimal memory overhead.

Layer 4: Database Cache

Databases have their own caching layers that most developers forget about:

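```sql
-- PostgreSQL: shared_buffers (in-memory page cache)
-- Default is only 128MB; ~25% of RAM is a common starting point
-- (values below assume a 16 GB server). Takes effect after a restart.
ALTER SYSTEM SET shared_buffers = '4GB';

-- PostgreSQL: effective_cache_size (tells the query planner how much OS cache to expect)
ALTER SYSTEM SET effective_cache_size = '12GB';
SELECT pg_reload_conf(); -- applies effective_cache_size; shared_buffers still needs a restart

-- MySQL: InnoDB buffer pool (caches data + indexes)
SET GLOBAL innodb_buffer_pool_size = 8589934592; -- 8GB
```

Materialized views cache the results of expensive queries inside the database:

```sql
CREATE MATERIALIZED VIEW product_stats AS
SELECT
    p.id,
    p.name,
    COALESCE(r.review_count, 0) AS review_count,
    r.avg_rating,
    COALESCE(s.total_sold, 0) AS total_sold
FROM products p
-- Aggregate each child table on its own: joining reviews and order_items
-- directly multiplies rows and inflates both the counts and the sums
LEFT JOIN (
    SELECT product_id, COUNT(*) AS review_count, AVG(rating) AS avg_rating
    FROM reviews GROUP BY product_id
) r ON r.product_id = p.id
LEFT JOIN (
    SELECT product_id, SUM(quantity) AS total_sold
    FROM order_items GROUP BY product_id
) s ON s.product_id = p.id;

-- REFRESH ... CONCURRENTLY requires a unique index on the view
CREATE UNIQUE INDEX product_stats_id_idx ON product_stats (id);

-- Refresh periodically (not on every write); CONCURRENTLY keeps the view readable meanwhile
REFRESH MATERIALIZED VIEW CONCURRENTLY product_stats;
```

Cache Invalidation Strategies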

This is the hard part. You have four main patterns, each with different consistency and complexity tradeoffs:

Cache-Aside (Lazy Loading)

The application manages the cache explicitly. This is the most common pattern:

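```javascript
async function getUser(userId) {
  // 1. Check cache
  const cached = await redis.get(`user:${userId}`);
  if (cached) {
    return JSON.parse(cached);
  }

  // 2. Cache miss: query DB (assuming node-postgres, which returns result.rows)
  const { rows } = await db.query('SELECT * FROM users WHERE id = $1', [userId]);
  const user = rows[0];

  // 3. Populate cache with TTL (missing users are handled under Cache Penetration)
  if (user) {
    await redis.set(`user:${userId}`, JSON.stringify(user), 'EX', 300);
  }
  return user;
}

async function updateUser(userId, data) {
  // 1. Update DB (source of truth)
  await db.query('UPDATE users SET name = $1 WHERE id = $2', [data.name, userId]);

  // 2. Invalidate cache (NOT update: avoids out-of-order cache writes)
  await redis.del(`user:${userId}`);
  // Next read will repopulate from DB
}
```

Why delete instead of update? With concurrent writers, cache updates can land out of order. Writer A updates the DB, writer B updates the DB and then the cache, and A's delayed cache write lands last. The cache now holds A's older value until the TTL expires. Deleting sidesteps this, because the next read repopulates from the source of truth. It narrows the race rather than closing it: a slow reader can still write back a value it fetched before the update. That is one more reason every key keeps a TTL.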
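The write-write race can be replayed deterministically. In this sketch, plain Maps stand in for the database and Redis (an assumption for illustration). The interleaving is fixed: both writers update the DB, then their cache steps arrive in the opposite order.

```javascript
// Deterministic replay of one interleaving of two writers, A and B.
// Plain Maps stand in for the database and Redis.
function replay(strategy) {
  const db = new Map([['user:42', 'v0']]);
  const cache = new Map([['user:42', 'v0']]);

  // What a writer does to the cache after its DB write
  const cacheStep = (key, value) =>
    strategy === 'update' ? cache.set(key, value) : cache.delete(key);

  db.set('user:42', 'vA');    // A writes the DB
  db.set('user:42', 'vB');    // B writes the DB (B's write is the latest)
  cacheStep('user:42', 'vB'); // B's cache step runs first
  cacheStep('user:42', 'vA'); // A's delayed cache step runs last

  // Cache-aside read
  let served = cache.get('user:42');
  if (served === undefined) {
    served = db.get('user:42');
    cache.set('user:42', served);
  }
  return { db: db.get('user:42'), served };
}

console.log(replay('update')); // the cache serves A's value while the DB holds B's
console.log(replay('delete')); // the read repopulates B's value from the DB
```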

Write-Through

Every write goes to both cache and database synchronously:

```javascript
async function updateUser(userId, data) {
  // Write to DB
  const { rows } = await db.query(
    'UPDATE users SET name = $1 WHERE id = $2 RETURNING *',
    [data.name, userId]
  );
  const user = rows[0];

  // Write to cache right after the DB write. This is NOT one transaction:
  // if this call fails, the cache keeps the old value until its TTL expires.
  await redis.set(`user:${userId}`, JSON.stringify(user), 'EX', 300);

  return user;
}
```

Use this when read-after-write consistency is critical (e.g., a user updates their profile and immediately sees the change).

Write-Behind (Write-Back)

Writes go to the cache only; a background process flushes them to the database asynchronously:

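```javascript
async function incrementViewCount(postId) {
  // Write only to Redis: instant response
  await redis.incr(`views:${postId}`);
}

// Background job: flush to DB every 30 seconds
async function flushViewCounts() {
  // SCAN in batches instead of KEYS, which blocks Redis while it walks every key
  const stream = redis.scanStream({ match: 'views:*', count: 100 });
  for await (const keys of stream) {
    for (const key of keys) {
      const postId = key.split(':')[1];
      const count = await redis.getdel(key); // atomic get + delete (Redis 6.2+)
      if (count) {
        // If this UPDATE throws, the drained count is lost
        await db.query(
          'UPDATE posts SET view_count = view_count + $1 WHERE id = $2',
          [parseInt(count, 10), postId]
        );
      }
    }
  }
}

setInterval(() => flushViewCounts().catch(console.error), 30_000);
```

This is a common design for analytics counters, view counts, and similar high-frequency tallies. The tradeoff is clear: if Redis crashes before flushing, those writes are lost.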
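The same buffer-then-flush idea can be sketched without Redis. In this in-process version (an assumption for illustration), a Map stands in for the Redis counters, and `persist` is a hypothetical function that runs the UPDATE. `drain()` swaps the buffer out, much as GETDEL reads and resets a key in one step. Failed batches are requeued instead of dropped.

```javascript
// In-process stand-in for the Redis counters (an assumption for illustration)
class WriteBehindCounter {
  constructor() {
    this.pending = new Map(); // postId -> increments not yet persisted
  }

  increment(postId, by = 1) {
    this.pending.set(postId, (this.pending.get(postId) || 0) + by);
  }

  // Hand the current batch to the flusher and start a fresh one, so increments
  // arriving mid-flush are kept for the next run (the role GETDEL plays in Redis)
  drain() {
    const batch = this.pending;
    this.pending = new Map();
    return batch;
  }

  // Merge a batch back in, e.g. after the database write failed
  requeue(batch) {
    for (const [postId, delta] of batch) this.increment(postId, delta);
  }
}

// persist(postId, delta) is a hypothetical async function that runs the UPDATE
async function flushCounts(counter, persist) {
  const batch = counter.drain();
  for (const [postId, delta] of batch) {
    try {
      await persist(postId, delta);
      batch.delete(postId); // persisted: drop it from the retry set
    } catch (err) {
      // Leave it in `batch`; it is requeued below and retried on the next flush
    }
  }
  counter.requeue(batch);
}
```

An in-process buffer shares the Redis version's weakness in a stronger form: if the process dies, its pending increments are gone.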

Event-Driven Invalidation

Writes publish events; cache consumers listen and invalidate:

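```javascript
const { CreateInvalidationCommand } = require('@aws-sdk/client-cloudfront');
// Assumed to exist: `db`, `redis`, connected kafkajs `producer` and `consumer`,
// and `cloudfront`, a CloudFrontClient from the same AWS SDK v3 package

// Writer service
async function updateProduct(productId, data) {
  await db.query('UPDATE products SET price = $1 WHERE id = $2', [data.price, productId]);

  // Publish event: the writer doesn't know about caches
  await producer.send({
    topic: 'product.updated',
    messages: [{ key: String(productId), value: JSON.stringify({ id: productId, ...data }) }],
  });
}

// Cache invalidation consumer
async function startInvalidationConsumer() {
  await consumer.subscribe({ topic: 'product.updated' });
  await consumer.run({
    eachMessage: async ({ message }) => {
      const { id } = JSON.parse(message.value.toString());

      // Invalidate all caches that hold this product
      await redis.del(`product:${id}`);
      await redis.del(`product-page:${id}`);
      await redis.del(`category:${getCategoryId(id)}`); // related keys; getCategoryId is an app helper

      // Purge CDN cache
      await cloudfront.send(new CreateInvalidationCommand({
        DistributionId: process.env.CLOUDFRONT_DISTRIBUTION_ID, // placeholder config
        InvalidationBatch: {
          CallerReference: `product-${id}-${Date.now()}`, // must be unique per request
          Paths: { Quantity: 1, Items: [`/api/products/${id}`] },
        },
      }));
    },
  });
}
```

This is the pattern for multi-layer invalidation, where a single data change needs to invalidate caches at several levels (Redis, CDN, reverse proxy, search index).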
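The decoupling can be tried locally with Node's built-in EventEmitter standing in for Kafka. Unlike a Kafka topic, an EventEmitter is in-process and not durable, so a crash loses events; treat this as a model of the wiring only. The writer only emits, and each cache layer registers its own invalidator. The Map and array stand-ins are hypothetical.

```javascript
const { EventEmitter } = require('events');

const bus = new EventEmitter(); // in-process stand-in for the product.updated topic

// Stand-ins for two cache layers
const redisLike = new Map([
  ['product:7', '{"price":10}'],
  ['product-page:7', '<html>price 10</html>'],
  ['product:8', '{"price":3}'],
]);
const cdnPurges = [];

// Each layer subscribes independently; the writer knows none of them
bus.on('product.updated', ({ id }) => {
  redisLike.delete(`product:${id}`);
  redisLike.delete(`product-page:${id}`);
});
bus.on('product.updated', ({ id }) => cdnPurges.push(`/api/products/${id}`));

function updateProduct(db, id, data) {
  db.set(id, { ...db.get(id), ...data });       // 1. write the source of truth
  bus.emit('product.updated', { id, ...data }); // 2. announce the change
}
```

Adding a new cache layer means adding one more listener. The writer does not change.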

Eviction Policies

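When cache memory is full, something has to go. The eviction policy determines what. Redis exposes this as maxmemory-policy. The options are noeviction (the default: writes fail once memory is full), allkeys-lru, allkeys-lfu, and allkeys-random (evict from any key), and volatile-lru, volatile-lfu, volatile-random, and volatile-ttl (evict only keys that have a TTL). For a pure cache, an allkeys policy is usually what you want.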
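To make LRU concrete, here is a minimal in-process LRU cache. It relies on JavaScript's Map iterating in insertion order. It illustrates the policy only and does not reflect how Redis implements it: Redis approximates LRU by sampling a few keys rather than tracking exact order.

```javascript
// Minimal LRU cache: a Map iterates in insertion order,
// so the first key is always the least recently used one.
class LRUCache {
  constructor(capacity) {
    this.capacity = capacity;
    this.map = new Map();
  }

  get(key) {
    if (!this.map.has(key)) return undefined;
    const value = this.map.get(key);
    // Re-insert to mark the key as most recently used
    this.map.delete(key);
    this.map.set(key, value);
    return value;
  }

  set(key, value) {
    if (this.map.has(key)) this.map.delete(key);
    this.map.set(key, value);
    if (this.map.size > this.capacity) {
      // Evict the least recently used entry (first in iteration order)
      const oldest = this.map.keys().next().value;
      this.map.delete(oldest);
    }
  }
}
```

With capacity 2, writing a and b, reading a, then writing c evicts b, because a was touched more recently.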

Configuring Redis Eviction

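```shell
# Set max memory
redis-cli CONFIG SET maxmemory 4gb

# Set eviction policy
redis-cli CONFIG SET maxmemory-policy allkeys-lfu

# CONFIG SET is not persisted: add both settings to redis.conf or run CONFIG REWRITE

# Monitor evicted keys (grep runs in your shell, not inside redis-cli)
redis-cli INFO stats | grep evicted_keys
```

TTL Best Practices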

Setting the right TTL is about matching your staleness tolerance to your data’s change frequency:

```javascript
const TTL = {
  // Static config (changes on deploy): long TTL
  featureFlags: 3600, // 1 hour

  // User profiles (changes occasionally): moderate TTL
  userProfile: 300, // 5 minutes

  // Product prices (changes frequently): short TTL
  productPrice: 60, // 1 minute

  // Session data (must be fresh): very short TTL + event invalidation
  cartContents: 30, // 30 seconds

  // Computed aggregates (expensive to regenerate): long TTL + manual refresh
  dashboardStats: 900, // 15 minutes
};

// ALWAYS add jitter so keys written together don't all expire together
function ttlWithJitter(baseTTL) {
  const jitter = Math.round(baseTTL * 0.1 * (2 * Math.random() - 1)); // ±10%
  return baseTTL + jitter;
}

await redis.set(key, value, 'EX', ttlWithJitter(TTL.userProfile));
```

Common Pitfalls and Solutions

These are the problems that don’t show up in development but destroy you in production:

1. Thundering Herd

When a popular cache key expires, hundreds of requests simultaneously miss the cache and slam the database:

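```javascript
const crypto = require('crypto');
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Release the lock only if it still holds our token (it may have expired and been re-acquired)
const RELEASE_LOCK = `if redis.call('GET', KEYS[1]) == ARGV[1] then
  return redis.call('DEL', KEYS[1])
end
return 0`;

async function getUserWithLock(userId) {
  const cacheKey = `user:${userId}`;
  const lockKey = `lock:${cacheKey}`;

  // Try cache first
  let cached = await redis.get(cacheKey);
  if (cached) return JSON.parse(cached);

  // Try to acquire lock (only one winner); the random token identifies the holder
  const token = crypto.randomUUID();
  const acquired = await redis.set(lockKey, token, 'NX', 'EX', 5);
  if (acquired) {
    try {
      // Winner: fetch from DB and populate cache
      const { rows } = await db.query('SELECT * FROM users WHERE id = $1', [userId]);
      const user = rows[0];
      if (user) await redis.set(cacheKey, JSON.stringify(user), 'EX', ttlWithJitter(300));
      return user;
    } finally {
      await redis.eval(RELEASE_LOCK, 1, lockKey, token);
    }
  }

  // Losers: wait briefly then retry (cache should be populated by winner)
  await sleep(50);
  cached = await redis.get(cacheKey);
  if (cached) return JSON.parse(cached);

  // Fallback: query DB directly (lock holder might be slow or have failed)
  const { rows } = await db.query('SELECT * FROM users WHERE id = $1', [userId]);
  return rows[0];
}
```

An even better approach is stale-while-revalidate: keep serving the stale cached value while a single request refreshes it in the background.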
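Here is a minimal in-process sketch of stale-while-revalidate. It assumes a hypothetical `loader(key)` function that fetches from the source of truth. Each entry has a fresh window and a longer stale window. A stale hit returns immediately and triggers at most one background refresh per key. Across several servers you would still pair this with a shared lock like the one above.

```javascript
class SwrCache {
  // loader: hypothetical async (key) => value that reads the source of truth
  constructor(loader, { freshMs, staleMs, clock = Date.now }) {
    this.loader = loader;
    this.freshMs = freshMs;    // serve without refreshing
    this.staleMs = staleMs;    // after freshMs: serve, but refresh in the background
    this.clock = clock;
    this.entries = new Map();  // key -> { value, freshUntil, staleUntil }
    this.inflight = new Map(); // key -> Promise of an ongoing refresh
  }

  async get(key) {
    const entry = this.entries.get(key);
    const now = this.clock();
    if (entry && now < entry.freshUntil) return entry.value;
    if (entry && now < entry.staleUntil) {
      // Stale hit: answer immediately; a failed refresh just keeps the stale value
      this.refresh(key).catch(() => {});
      return entry.value;
    }
    // Miss, or too stale to serve: wait for the load
    return this.refresh(key);
  }

  refresh(key) {
    // Deduplicate: concurrent callers share one in-flight load per key
    if (!this.inflight.has(key)) {
      const load = Promise.resolve()
        .then(() => this.loader(key))
        .then((value) => {
          const now = this.clock();
          this.entries.set(key, {
            value,
            freshUntil: now + this.freshMs,
            staleUntil: now + this.freshMs + this.staleMs,
          });
          return value;
        })
        .finally(() => this.inflight.delete(key));
      this.inflight.set(key, load);
    }
    return this.inflight.get(key);
  }
}
```

The `clock` option exists so the timing can be tested with a fake clock. In production it defaults to `Date.now`.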

2. Cache Penetration

Requests for non-existent keys always miss the cache and hit the database:

```javascript
async function getUserSafe(userId) {
  const cacheKey = `user:${userId}`;
  const cached = await redis.get(cacheKey);
  if (cached === 'NULL_SENTINEL') return null; // Cached negative result
  if (cached) return JSON.parse(cached);

  const { rows } = await db.query('SELECT * FROM users WHERE id = $1', [userId]);
  const user = rows[0];
  if (!user) {
    // Cache the miss with short TTL
    await redis.set(cacheKey, 'NULL_SENTINEL', 'EX', 60);
    return null;
  }

  await redis.set(cacheKey, JSON.stringify(user), 'EX', 300);
  return user;
}
```

For large keyspaces, use a Bloom filter as a pre-check:

```javascript
const { BloomFilter } = require('bloom-filters');

// Filter sized for 1M items at a 1% false-positive rate
const filter = BloomFilter.create(1000000, 0.01);

// On startup: populate from DB
const { rows } = await db.query('SELECT id FROM users');
rows.forEach((row) => filter.add(String(row.id)));
// New users must also be added on insert (filter.add), or they will be
// reported as non-existent until the filter is rebuilt

// On every request: check Bloom filter first
async function getUser(userId) {
  if (!filter.has(String(userId))) {
    return null; // Definitely doesn't exist: skip cache and DB entirely
  }
  // Might exist: proceed to cache/DB lookup
  return getUserSafe(userId);
}
```

3. Hot Key Problem

One key receives disproportionate traffic, overloading a single Redis shard:

```javascript
// Solution 1: Key replication across shards
// The suffixed copies hash to different slots, spreading reads across nodes
const HOT_KEY_REPLICAS = 5;

function getShardedKey(key) {
  const shard = Math.floor(Math.random() * HOT_KEY_REPLICAS);
  return `${key}:shard:${shard}`;
}

// On write: update all replicas. Separate SETs rather than one pipeline,
// because in Redis Cluster the copies live on different nodes and
// ioredis cluster pipelines expect all keys to be served by one node.
async function setHotKey(key, value, ttl) {
  const writes = [];
  for (let i = 0; i < HOT_KEY_REPLICAS; i++) {
    writes.push(redis.set(`${key}:shard:${i}`, value, 'EX', ttl));
  }
  await Promise.all(writes);
}

// On read: pick random replica
async function getHotKey(key) {
  return redis.get(getShardedKey(key));
}
```

```javascript
// Solution 2: L1 in-process cache (Node.js example)
const NodeCache = require('node-cache');
const localCache = new NodeCache({ stdTTL: 5, checkperiod: 2 }); // 5 s TTL bounds per-server staleness

async function getWithLocalCache(key) {
  // L1: in-process (no network hop, per-server)
  const local = localCache.get(key);
  if (local !== undefined) return local; // node-cache returns undefined on a miss

  // L2: Redis (~0.5 ms, shared)
  const remote = await redis.get(key);
  if (remote) {
    const parsed = JSON.parse(remote);
    localCache.set(key, parsed); // promote to L1
    return parsed;
  }

  // L3: Database (fetchFromDB is an application helper, not shown)
  const value = await fetchFromDB(key);
  await redis.set(key, JSON.stringify(value), 'EX', 300);
  localCache.set(key, value);
  return value;
}
```

4. Cache Warming

A cold cache after deployment or failover means the first wave of requests all miss and hit the database at once:

```javascript
// Pre-warm cache on deployment
async function warmCache() {
  // Get the most frequently accessed users from the last hour of access logs
  const { rows: hotKeys } = await db.query(`
    SELECT user_id, COUNT(*) AS freq
    FROM access_logs
    WHERE timestamp > NOW() - INTERVAL '1 hour'
    GROUP BY user_id
    ORDER BY freq DESC
    LIMIT 10000
  `);

  // Fetch all users in one query instead of one query per user
  const ids = hotKeys.map((row) => row.user_id);
  const { rows: users } = await db.query('SELECT * FROM users WHERE id = ANY($1)', [ids]);

  // Batch load into Redis, with jittered TTLs so warmed keys don't expire together
  // (on Redis Cluster, group keys by node or send individual SETs instead)
  const pipeline = redis.pipeline();
  for (const user of users) {
    pipeline.set(`user:${user.id}`, JSON.stringify(user), 'EX', ttlWithJitter(300));
  }
  await pipeline.exec();

  console.log(`Warmed ${users.length} cache entries`);
}
```

Cache Key Design

Bad cache keys cause collisions, waste memory, and make invalidation impossible. Good cache keys are structured and predictable:

```javascript
// Bad: ambiguous, no versioning
const badKey = `user_42`;
const badKey2 = `data_${id}`;

// Good: namespaced, versioned, structured
const userKey = `v2:user:${userId}`;
const ordersKey = `v2:user:${userId}:orders:page:${page}`;
const reviewsKey = `v2:product:${productId}:reviews:sort:${sortBy}`;

// Pattern: {version}:{entity}:{id}:{sub-resource}:{params}
function cacheKey(entity, id, options = {}) {
  const version = 'v2';
  const base = `${version}:${entity}:${id}`;
  const suffix = Object.entries(options)
    .sort(([a], [b]) => a.localeCompare(b)) // deterministic order
    .map(([k, v]) => `${k}:${v}`)
    .join(':');
  return suffix ? `${base}:${suffix}` : base;
}

cacheKey('user', 42);                            // "v2:user:42"
cacheKey('user', 42, { page: 3, sort: 'name' }); // "v2:user:42:page:3:sort:name"
```

Wildcard Invalidation

When a user updates their profile, you need to invalidate ALL keys related to that user:

async function invalidateUser(userId) {

// Option 1: Scan for matching keys (slow, don't use in hot path)

// const keys = await redis.keys(`v2:user:${userId}:*`);

// Option 2: Track related keys in a set

const relatedKeys = await redis.smembers(`v2:user:${userId}:_keys`);

if (relatedKeys.length > 0) {

await redis.del(...relatedKeys);

await redis.del(`v2:user:${userId}:_keys`);

}

// Option 3: Version-based invalidation (increment version, old keys become orphans)

await redis.incr(`v2:user:${userId}:_version`);

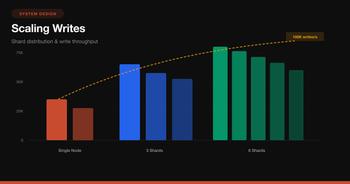

}Redis Cluster: Scaling the Cache

A single Redis node handles roughly 100K simple operations per second, because it executes commands on one main thread. When you need more:

```mermaid
graph TD
    subgraph "Redis Cluster (6 nodes)"
        M1[Master 1<br/>Slots 0-5460] --> R1[Replica 1]
        M2[Master 2<br/>Slots 5461-10922] --> R2[Replica 2]
        M3[Master 3<br/>Slots 10923-16383] --> R3[Replica 3]
    end
    C[Client] --> M1
    C --> M2
    C --> M3
    style M1 fill:#c84b2f,stroke:#991b1b,color:#fff
    style M2 fill:#c84b2f,stroke:#991b1b,color:#fff
    style M3 fill:#c84b2f,stroke:#991b1b,color:#fff
    style R1 fill:#2563eb,stroke:#1d4ed8,color:#fff
    style R2 fill:#2563eb,stroke:#1d4ed8,color:#fff
    style R3 fill:#2563eb,stroke:#1d4ed8,color:#fff
    style C fill:#059669,stroke:#047857,color:#fff
```

Key points:

- Redis Cluster hashes keys into 16,384 slots distributed across masters

- Each master has a replica for failover

- Multi-key commands (MGET, MSET), transactions, and Lua scripts only work if all keys hash to the same slot; many clients also restrict pipelines to keys on a single node

- Use hash tags to force related keys to the same slot: {user:42}:profile and {user:42}:orders → both hash on user:42
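The slot mapping itself is small enough to sketch. Per the Redis Cluster specification, a key's slot is its CRC16 (XMODEM variant) modulo 16,384. When the key contains a non-empty {...} section, only that part is hashed. The implementation below is illustrative. In production, let the client library compute slots, and use the CLUSTER KEYSLOT command to check a key against a live cluster.

```javascript
// CRC16-CCITT, XMODEM variant (polynomial 0x1021, initial value 0), as used by Redis Cluster
function crc16(bytes) {
  let crc = 0;
  for (const byte of bytes) {
    crc ^= byte << 8;
    for (let bit = 0; bit < 8; bit++) {
      crc = crc & 0x8000 ? ((crc << 1) ^ 0x1021) & 0xffff : (crc << 1) & 0xffff;
    }
  }
  return crc;
}

// Map a key to one of 16,384 slots, honoring hash tags: if the key has a "{"
// followed later by a "}" with at least one character between them,
// only the text inside the braces is hashed.
function keySlot(key) {
  const open = key.indexOf('{');
  if (open !== -1) {
    const close = key.indexOf('}', open + 1);
    if (close > open + 1) key = key.slice(open + 1, close);
  }
  return crc16(Buffer.from(key, 'utf8')) % 16384;
}
```

keySlot('{user:42}:profile') and keySlot('{user:42}:orders') return the same slot because both reduce to hashing "user:42". An empty tag such as "foo{}bar" hashes the whole key.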

```javascript
// Hash tags: everything inside {} determines the slot
const keys = {
  profile: '{user:42}:profile',
  orders: '{user:42}:orders',
  prefs: '{user:42}:preferences',
};

// All three keys land on the same slot, so MGET works
const [profile, orders, prefs] = await redis.mget(
  keys.profile, keys.orders, keys.prefs
);
```

Monitoring Your Cache

A cache you don’t monitor is a liability. Track these metrics:

```javascript
// Key metrics to monitor
const metrics = {
  hitRate:     'cache_hits / (cache_hits + cache_misses)',   // Target: > 90%
  latencyP99:  'redis_command_duration_p99',                 // Target: < 2ms
  evictions:   'redis_evicted_keys_total',                   // Target: low/stable
  memoryUsed:  'redis_used_memory_bytes / redis_max_memory', // Alert: > 85%
  connections: 'redis_connected_clients',                    // Alert: near max
};
```

```shell
# Redis CLI monitoring
redis-cli INFO stats | grep -E "keyspace_hits|keyspace_misses|evicted_keys"

# Quick hit rate calculation (counters are cumulative since restart or CONFIG RESETSTAT)
redis-cli INFO stats | awk -F: '
  /keyspace_hits/   { hits = $2 }
  /keyspace_misses/ { misses = $2 }
  END {
    if (hits + misses > 0) printf "Hit rate: %.1f%%\n", hits / (hits + misses) * 100
    else print "No lookups yet"
  }
'
```

If your hit rate drops below 80%, investigate:

- Are TTLs too short? (keys expire before being reused)

- Is the cache too small? (evictions climbing)

- Are cache keys too specific? (each user-page-sort combo is unique)

- Is there a bug causing excessive invalidation?
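The same hit-rate calculation is easy to run from application code, for example to export it as a metric. This sketch parses the text the INFO command returns (with ioredis, `await redis.info('stats')` yields it as a string). The helper names are hypothetical. Because the counters are cumulative, take the difference between two samples if you want the current hit rate rather than the lifetime one.

```javascript
// Parse INFO output (lines like "keyspace_hits:1234\r\n") into an object of strings
function parseInfo(text) {
  const fields = {};
  for (const line of text.split(/\r?\n/)) {
    if (!line || line.startsWith('#')) continue; // skip blanks and "# Stats" headers
    const idx = line.indexOf(':');
    if (idx > 0) fields[line.slice(0, idx)] = line.slice(idx + 1);
  }
  return fields;
}

// Hit rate as a fraction, or null when there have been no lookups yet
function hitRate(infoText) {
  const info = parseInfo(infoText);
  const hits = Number(info.keyspace_hits || 0);
  const misses = Number(info.keyspace_misses || 0);
  return hits + misses === 0 ? null : hits / (hits + misses);
}
```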

The Caching Decision Checklist

Before adding a cache, ask these questions:

- Is this data read-heavy? (>10:1 read-to-write ratio) — if not, caching may hurt more than help

- Can I tolerate staleness? — if data must be real-time, caching adds complexity without benefit

- What’s the cache-miss penalty? If the underlying query is already fast (< 5ms), the savings are small and every miss adds an extra network hop

- What’s my invalidation strategy? — TTL-only? Event-driven? Manual purge?

- What happens when the cache goes down? — can the DB handle the full load?

- Am I caching the right granularity? — too fine (per-field) wastes memory; too coarse (full page) makes invalidation hard
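The question about cache failure comes down to arithmetic on the hit rate. Here is a sketch using the 50,000 requests per second from the latency section. The function names are illustrative.

```javascript
// Queries per second that reach the database while the cache is up: only the misses
function dbQueriesPerSecond(requestsPerSecond, hitRate) {
  return requestsPerSecond * (1 - hitRate);
}

// How many times more load the database sees if the cache disappears entirely
function coldCacheMultiplier(hitRate) {
  return 1 / (1 - hitRate);
}

const rps = 50000;
for (const hitRate of [0.8, 0.95, 0.99]) {
  console.log(
    `hit rate ${hitRate}: DB sees ~${Math.round(dbQueriesPerSecond(rps, hitRate))} qps, ` +
      `~${Math.round(coldCacheMultiplier(hitRate))}x more if the cache goes down`
  );
}
```

At a 95% hit rate, the database normally sees about 2,500 queries per second. A cache outage sends it all 50,000, a 20x jump. The better the cache works, the steeper the cliff when it fails.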

Real-World Patterns

Facebook’s Memcached at Scale

Facebook runs the largest Memcached deployment in the world:

- Regional pools: caches replicated per datacenter, invalidated via McRouter

- Lease-based invalidation: prevents thundering herd with lease tokens

- Gutter pool: fallback cache pool that absorbs traffic when primary nodes fail

- Delete-on-write: always delete from cache on write, never update

Twitter’s Cache Architecture

Twitter caches the home timeline as a pre-computed list per user:

- Timeline cache: Redis sorted sets holding tweet IDs per user

- Fan-out on write: when a user tweets, their tweet ID is pushed into all followers’ timeline caches

- Hybrid: celebrities use fan-out on read (too many followers to push-on-write)

Netflix’s EVCache

Netflix built EVCache (a Memcached wrapper) for:

- Cross-region replication: cache writes are replicated across AWS regions

- Warm-up from replica: new nodes pull data from peers instead of cold-starting from DB

- Zone-aware routing: reads go to the same availability zone to minimize latency

Conclusion

Caching is not a single decision — it’s a layered strategy where each layer serves a different purpose:

| Layer | What to Cache | TTL Range | Invalidation |
|---|---|---|---|
| Browser | Static assets, API responses | 1 min - 1 yr | Fingerprinted URLs, ETags |
| CDN | Public content, images | 5 min - 1 day | API purge, TTL |
| Reverse Proxy | Full API responses | 1 min - 15 min | TTL, PURGE endpoint |
| Application (Redis) | Objects, queries, sessions | 30 s - 1 hr | Event-driven + TTL |
| Database | Data pages (buffer pool), materialized views | Auto-managed / refresh schedule | Engine-managed eviction, REFRESH MATERIALIZED VIEW |

The rules that survive across all layers:

- Cache-aside for reads, delete-on-write for writes — the safest default

- TTL with jitter on everything — prevents coordinated expiry

- Cache the NULL — prevents penetration attacks

- Monitor hit rate obsessively — a cache below 80% hit rate is a red flag

- Plan for cache failure — your system must survive a cold cache, even if slowly

Start with a single Redis instance and cache-aside. Add layers only when monitoring shows you need them. The best caching strategy is the simplest one that meets your latency targets.