The previous articles in this series covered scaling reads and scaling writes. Both deal with request-response patterns — client asks, server answers. Real-time systems flip that model. The server pushes data to clients the moment something changes, without waiting for a request.

This is the hardest scaling problem in web architecture. A single chat message in a 10,000-member group means 10,000 pushes. A stock price tick means pushing to every connected trader. A live sports score means millions of simultaneous updates.

This article covers the patterns I’ve used to build and scale real-time systems from thousands to millions of concurrent connections.

The Architecture at a Glance

The architecture has four concerns: transport (how clients connect), routing (which server has which client), fan-out (who gets each message), and reliability (what happens when connections drop).

Choosing a Transport

The first decision: how do clients receive updates?

WebSocket — The Default Choice

WebSocket provides a persistent, bidirectional TCP connection. After an HTTP upgrade handshake, both client and server can send frames at any time. This is the right choice for most real-time applications.

// --- Server: Node.js with ws library ---

import { WebSocketServer, WebSocket } from 'ws';

import { createServer } from 'http';

const server = createServer();

const wss = new WebSocketServer({ server });

// Track connections by user

const connections = new Map<string, Set<WebSocket>>();

wss.on('connection', (ws, req) => {

const userId = authenticateFromHeaders(req.headers);

if (!userId) {

ws.close(4001, 'Unauthorized');

return;

}

// Register connection

if (!connections.has(userId)) {

connections.set(userId, new Set());

}

connections.get(userId)!.add(ws);

ws.on('message', (data) => {

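let message;

try {

message = JSON.parse(data.toString());

} catch {

// Ignore malformed frames instead of letting the exception crash the process

return;

}

handleMessage(userId, message);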

});

ws.on('close', () => {

connections.get(userId)?.delete(ws);

if (connections.get(userId)?.size === 0) {

connections.delete(userId);

}

});

// Heartbeat to detect dead connections (isAlive is a custom property; in TypeScript, declare it on an extended WebSocket type)

ws.isAlive = true;

ws.on('pong', () => { ws.isAlive = true; });

});

// Ping all clients every 30s — kill dead ones

setInterval(() => {

wss.clients.forEach((ws) => {

if (!ws.isAlive) return ws.terminate();

ws.isAlive = false;

ws.ping();

});

}, 30_000);

// Push to a specific user (all their connections)

function pushToUser(userId: string, event: any): void {

const sockets = connections.get(userId);

if (!sockets) return;

const payload = JSON.stringify(event);

for (const ws of sockets) {

if (ws.readyState === WebSocket.OPEN) {

ws.send(payload);

}

}

}

server.listen(8080);

// --- Client: browser ---

class RealtimeClient {

private ws: WebSocket | null = null;

private url: string;

private reconnectDelay = 1000;

private maxDelay = 30000;

private handlers = new Map<string, Function[]>();

constructor(url: string) {

this.url = url;

this.connect();

}

private connect(): void {

this.ws = new WebSocket(this.url);

this.ws.onopen = () => {

console.log('Connected');

this.reconnectDelay = 1000; // Reset backoff

};

this.ws.onmessage = (event) => {

const data = JSON.parse(event.data);

const listeners = this.handlers.get(data.type) || [];

listeners.forEach((fn) => fn(data.payload));

};

this.ws.onclose = () => {

// Exponential backoff with jitter

const jitter = Math.random() * 1000;

const delay = Math.min(

this.reconnectDelay + jitter,

this.maxDelay

);

setTimeout(() => this.connect(), delay);

this.reconnectDelay *= 2;

};

}

on(eventType: string, handler: Function): void {

if (!this.handlers.has(eventType)) {

this.handlers.set(eventType, []);

}

this.handlers.get(eventType)!.push(handler);

}

send(data: any): void {

if (this.ws?.readyState === WebSocket.OPEN) {

this.ws.send(JSON.stringify(data));

}

}

}

// Usage

const client = new RealtimeClient('wss://api.example.com/ws');

client.on('message', (msg) => renderMessage(msg));

client.on('typing', (data) => showTypingIndicator(data));

Server-Sent Events: When You Only Need Push

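If the client never sends data over the real-time channel (notifications, live feeds, dashboards), SSE is simpler and often easier to scale. It uses plain HTTP, passes through most proxies and load balancers without special configuration, and has built-in reconnection: the browser reconnects automatically and reports the last event ID it received.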
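Reconnection with event IDs only helps if the server can replay what a client missed. Here is a minimal sketch of that idea, assuming a bounded in-memory history. `formatSseEvent` and `ReplayBuffer` are hypothetical names for illustration, not part of Express or any SSE library.

```typescript
// Hypothetical helpers for illustration only.

interface StoredEvent {
  id: number;
  type: string;
  data: unknown;
}

// Serialize one event in the text/event-stream wire format.
function formatSseEvent(e: StoredEvent): string {
  return `id: ${e.id}\nevent: ${e.type}\ndata: ${JSON.stringify(e.data)}\n\n`;
}

// Keeps the most recent `capacity` events so reconnecting clients can resume.
class ReplayBuffer {
  private events: StoredEvent[] = [];
  private nextId = 1;
  private capacity: number;

  constructor(capacity: number) {
    this.capacity = capacity;
  }

  append(type: string, data: unknown): StoredEvent {
    const stored: StoredEvent = { id: this.nextId++, type, data };
    this.events.push(stored);
    // Evict the oldest event once the buffer is full.
    if (this.events.length > this.capacity) this.events.shift();
    return stored;
  }

  // Events newer than the ID the client reports in Last-Event-ID.
  // A missing or malformed header replays nothing.
  since(lastEventIdHeader: string | undefined): StoredEvent[] {
    const lastId = Number.parseInt(lastEventIdHeader ?? '', 10);
    if (Number.isNaN(lastId)) return [];
    return this.events.filter((e) => e.id > lastId);
  }
}

const history = new ReplayBuffer(3);
history.append('message', { text: 'one' });
history.append('message', { text: 'two' });
history.append('notification', { title: 'three' });

// A client that last saw event 1 reconnects and needs events 2 and 3.
const missed = history.since('1');
const wire = missed.map(formatSseEvent).join('');
```

In an HTTP handler, the value passed to `since` would come from the `Last-Event-ID` request header, and `wire` would be written to the response before live pushes resume. If the client has fallen behind by more than the buffer holds, a separate catch-up path is still needed.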

// --- Server: Express SSE endpoint ---

import express from 'express';

const app = express();

const clients = new Map<string, express.Response[]>();

app.get('/events/:userId', (req, res) => {

const { userId } = req.params;

// SSE headers

res.writeHead(200, {

'Content-Type': 'text/event-stream',

'Cache-Control': 'no-cache',

'Connection': 'keep-alive',

'X-Accel-Buffering': 'no', // Disable nginx buffering

});

// Send initial connection event

res.write(`event: connected\ndata: {"status":"ok"}\n\n`);

// Register

if (!clients.has(userId)) clients.set(userId, []);

clients.get(userId)!.push(res);

// Keepalive comment every 15s (prevents proxy timeouts)

const keepalive = setInterval(() => {

res.write(': keepalive\n\n');

}, 15_000);

req.on('close', () => {

clearInterval(keepalive);

const userClients = clients.get(userId);

if (userClients) {

const idx = userClients.indexOf(res);

if (idx > -1) userClients.splice(idx, 1);

if (userClients.length === 0) clients.delete(userId);

}

});

});

// Push to user — with event ID for resume

let eventId = 0;

function pushSSE(userId: string, eventType: string, data: any): void {

const userClients = clients.get(userId);

if (!userClients) return;

eventId++;

const payload = [

`id: ${eventId}`,

`event: ${eventType}`,

`data: ${JSON.stringify(data)}`,

'',

'',

].join('\n');

for (const res of userClients) {

res.write(payload);

}

}

// --- Client: native EventSource ---

const events = new EventSource('/events/user123');

// Auto-reconnects with Last-Event-ID header

events.addEventListener('message', (e) => {

const msg = JSON.parse(e.data);

renderMessage(msg);

});

events.addEventListener('notification', (e) => {

showNotification(JSON.parse(e.data));

});

events.onerror = () => {

// Browser handles reconnection automatically

console.log('SSE reconnecting...');

};

Scaling Beyond One Server: The Pub/Sub Backbone

A single well-tuned server can typically handle on the order of 50,000-100,000 WebSocket connections, depending on message rate and memory per connection. Beyond that, you need multiple servers. The problem: Client A is connected to Server 1, but the event is produced on Server 3. How does the message reach Client A?

The answer: a pub/sub backbone that all servers subscribe to.
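To make the routing idea concrete without Redis, here is a minimal in-memory sketch. `InMemoryBus` and `ServerNode` are hypothetical stand-ins for the pub/sub backbone and a WebSocket server. Every node receives every published event and delivers only to users it holds locally.

```typescript
type Handler = (channel: string, message: string) => void;

// Stand-in for the pub/sub backbone: each publish reaches every subscriber.
class InMemoryBus {
  private subscribers: Handler[] = [];

  subscribe(handler: Handler): void {
    this.subscribers.push(handler);
  }

  publish(channel: string, message: string): void {
    for (const handler of this.subscribers) handler(channel, message);
  }
}

// Stand-in for one WebSocket server; `delivered` records what it pushed.
class ServerNode {
  readonly name: string;
  readonly delivered: string[] = [];
  private localUsers = new Set<string>();

  constructor(name: string, bus: InMemoryBus) {
    this.name = name;
    bus.subscribe((channel, message) => this.onEvent(channel, message));
  }

  connect(userId: string): void {
    this.localUsers.add(userId);
  }

  private onEvent(channel: string, message: string): void {
    if (!channel.startsWith('user:')) return;
    const userId = channel.slice('user:'.length);
    // Only the node holding the connection delivers; the others drop the event.
    if (this.localUsers.has(userId)) {
      this.delivered.push(`${userId} <- ${message}`);
    }
  }
}

const bus = new InMemoryBus();
const server1 = new ServerNode('server-1', bus);
const server3 = new ServerNode('server-3', bus);
server1.connect('clientA');

// The event is produced elsewhere, but client A is connected to server 1.
bus.publish('user:clientA', JSON.stringify({ type: 'notification' }));
```

The producer never needs to know which server holds the client. The cost is that every server sees every event, which is why sharding the backbone matters at larger scale.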

graph LR

subgraph "Server 1"

A[Client A]

B[Client B]

end

subgraph "Server 2"

C[Client C]

D[Client D]

end

subgraph "Pub/Sub"

R[(Redis)]

end

S[Event Source] -->|publish| R

R -->|subscribe| A

R -->|subscribe| C

Redis Pub/Sub Implementation

import Redis from 'ioredis';

class PubSubBridge {

private pub: Redis;

private sub: Redis;

private localConnections: Map<string, Set<WebSocket>>;

constructor(redisUrl: string, localConnections: Map<string, Set<WebSocket>>) {

this.pub = new Redis(redisUrl);

this.sub = new Redis(redisUrl);

this.localConnections = localConnections;

}

async start(): Promise<void> {

// Subscribe to user-specific and broadcast channels

await this.sub.psubscribe('user:*', 'channel:*', 'broadcast');

this.sub.on('pmessage', (_pattern, channel, message) => {

const event = JSON.parse(message);

if (channel === 'broadcast') {

// Push to ALL local connections

this.pushToAll(event);

} else if (channel.startsWith('user:')) {

// Push to specific user if connected to THIS server

const userId = channel.replace('user:', '');

this.pushToLocal(userId, event);

} else if (channel.startsWith('channel:')) {

// Push to all local members of a channel (pushToChannelMembers is not shown here; it needs a local index of channel members)

const channelId = channel.replace('channel:', '');

this.pushToChannelMembers(channelId, event);

}

});

}

// Publish event — reaches ALL servers via Redis

async publish(channel: string, event: any): Promise<void> {

await this.pub.publish(channel, JSON.stringify(event));

}

// Deliver to local connections only

private pushToLocal(userId: string, event: any): void {

const sockets = this.localConnections.get(userId);

if (!sockets) return;

const payload = JSON.stringify(event);

for (const ws of sockets) {

if (ws.readyState === WebSocket.OPEN) {

ws.send(payload);

}

}

}

private pushToAll(event: any): void {

const payload = JSON.stringify(event);

for (const [, sockets] of this.localConnections) {

for (const ws of sockets) {

if (ws.readyState === WebSocket.OPEN) {

ws.send(payload);

}

}

}

}

}

// Usage

const bridge = new PubSubBridge('redis://redis:6379', connections);

await bridge.start();

// When a chat message is sent

await bridge.publish('channel:general', {

type: 'message',

payload: { sender: 'alice', text: 'Hello!', ts: Date.now() },

});

// When a notification targets a specific user

await bridge.publish('user:bob', {

type: 'notification',

payload: { title: 'New follower', body: 'Alice followed you' },

});

Redis Pub/Sub Limitations

Redis pub/sub is fire-and-forget — no message persistence. If a server is briefly disconnected from Redis, it misses messages. For production systems with millions of users, consider:

| Backbone | Throughput | Persistence | Best For |
|---|---|---|---|
| Redis Pub/Sub | ~500K msg/s | None | Simple real-time, < 100K connections |
| NATS | ~10M msg/s | Optional (JetStream) | High-throughput microservices |
| Kafka | ~1M msg/s | Full | Ordered, durable event streams |
| Redis Streams | ~500K msg/s | Yes (with consumer groups) | Best of both worlds |

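For most applications, Redis Streams is the sweet spot: messages are persisted, so a server that briefly loses its Redis connection can resume from the last ID it read instead of missing them. Note that a consumer group splits entries among its consumers, so for broadcast-style delivery each server should read the stream independently (or use its own group).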
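The difference between fire-and-forget delivery and a persistent log can be shown without Redis. The sketch below uses a hypothetical `EventLog` class, which is a simplified model and not the Redis Streams API. Entries get increasing IDs, and a reader that was disconnected picks up everything after the last ID it processed.

```typescript
interface LogEntry {
  id: number;
  payload: string;
}

// Hypothetical append-only log; a simplified model of what a stream offers.
class EventLog {
  private entries: LogEntry[] = [];

  append(payload: string): number {
    const id = this.entries.length + 1;
    this.entries.push({ id, payload });
    return id;
  }

  // Return entries with an ID greater than the reader's cursor.
  readAfter(cursor: number): LogEntry[] {
    return this.entries.filter((entry) => entry.id > cursor);
  }
}

// A consumer that remembers how far it has read.
class LogReader {
  cursor = 0;
  readonly seen: string[] = [];
  private log: EventLog;

  constructor(log: EventLog) {
    this.log = log;
  }

  poll(): void {
    for (const entry of this.log.readAfter(this.cursor)) {
      this.seen.push(entry.payload);
      this.cursor = entry.id;
    }
  }
}

const log = new EventLog();
const reader = new LogReader(log);

log.append('m1');
reader.poll(); // reader is connected and sees m1

log.append('m2'); // reader is "disconnected": it does not poll
log.append('m3');

reader.poll(); // after reconnecting, it resumes from its cursor
```

With plain pub/sub, `m2` and `m3` would have been lost to the disconnected server. With a log and a cursor they are only delayed.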

Fan-Out: The Hard Part

Fan-out is where real-time systems break. A user posts in a group with 50,000 members. That’s 50,000 push operations triggered by a single write.
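To put rough numbers on that write amplification, here is a small sketch. `fanOutCost` is a hypothetical helper, and the message rate of 5 per second and batch size of 500 are illustrative assumptions.

```typescript
interface FanOutCost {
  pushesPerMessage: number;
  pushesPerSecond: number;
  batchesPerMessage: number;
}

// Estimate the push load created by one channel under fan-out on write.
function fanOutCost(
  members: number,
  messagesPerSecond: number,
  batchSize: number,
): FanOutCost {
  return {
    pushesPerMessage: members,
    pushesPerSecond: members * messagesPerSecond,
    batchesPerMessage: Math.ceil(members / batchSize),
  };
}

// A 50,000-member group receiving 5 messages per second, pushed in batches of 500.
const cost = fanOutCost(50_000, 5, 500);
// cost.pushesPerMessage  -> 50000
// cost.pushesPerSecond   -> 250000
// cost.batchesPerMessage -> 100
```

Even a modest message rate in one large group can produce hundreds of thousands of pushes per second, which is why the delivery strategy depends on group size.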

Fan-Out on Write vs Fan-Out on Read

graph TD

subgraph "Fan-Out on Write"

A1[Message arrives] --> B1[Resolve 50K members]

B1 --> C1[Push to each member's channel]

C1 --> D1["Latency: high write, instant read"]

end

subgraph "Fan-Out on Read"

A2[Message arrives] --> B2[Store in channel]

B2 --> C2[Client polls/subscribes to channel]

C2 --> D2["Latency: instant write, read fetches"]

end

Fan-out on write (push model): when a message arrives, immediately resolve all recipients and push to each one. This is what Twitter originally did, and it broke at Bieber-scale.

Fan-out on read (pull model): store the message once, and each client fetches from channels they’re subscribed to. Less write amplification, but higher read latency.

The hybrid approach (what actually works): fan-out on write for small groups (< 1,000 members), fan-out on read for large channels.

const FANOUT_THRESHOLD = 1000;

async function deliverMessage(channelId: string, message: any): Promise<void> {

const memberCount = await redis.scard(`channel:${channelId}:members`);

if (memberCount <= FANOUT_THRESHOLD) {

// Small channel: push to each member immediately

await fanOutOnWrite(channelId, message);

} else {

// Large channel: store and let clients pull

await fanOutOnRead(channelId, message);

}

}

async function fanOutOnWrite(channelId: string, message: any): Promise<void> {

// Store message

await redis.xadd(

`messages:${channelId}`,

'*',

'data', JSON.stringify(message)

);

// Get all member IDs

const members = await redis.smembers(`channel:${channelId}:members`);

// Push to each member's personal channel

// Batch into chunks to avoid overwhelming Redis

const BATCH = 500;

for (let i = 0; i < members.length; i += BATCH) {

const batch = members.slice(i, i + BATCH);

const pipeline = redis.pipeline();

for (const userId of batch) {

pipeline.publish(`user:${userId}`, JSON.stringify({

type: 'message',

channel: channelId,

payload: message,

}));

}

await pipeline.exec();

}

}

async function fanOutOnRead(channelId: string, message: any): Promise<void> {

// Just store the message — clients subscribe to the channel directly

// XADD returns the generated entry ID, which identifies the stored message

const messageId = await redis.xadd(

`messages:${channelId}`,

'*',

'data', JSON.stringify(message)

);

// Publish a lightweight notification to the channel

await redis.publish(`channel:${channelId}`, JSON.stringify({

type: 'new_message',

channel: channelId,

messageId,

// Don't include the full payload; the client fetches it by ID

}));

}

Presence: Who’s Online?

Presence tracking answers “who’s online right now?” — essential for chat, collaboration, and gaming.

Redis Sorted Set for Presence

class PresenceService {

private redis: Redis;

private readonly TTL = 60; // seconds

constructor(redis: Redis) {

this.redis = redis;

}

// Called on connect and every heartbeat interval

async setOnline(userId: string): Promise<void> {

const now = Date.now();

await this.redis.zadd('presence:online', now, userId);

}

// Called on disconnect

async setOffline(userId: string): Promise<void> {

await this.redis.zrem('presence:online', userId);

// Notify subscribers

await this.redis.publish('presence', JSON.stringify({

userId,

status: 'offline',

timestamp: Date.now(),

}));

}

// Get all online users (with stale cleanup)

async getOnlineUsers(): Promise<string[]> {

const cutoff = Date.now() - this.TTL * 1000;

// Remove stale entries (no heartbeat for TTL seconds)

await this.redis.zremrangebyscore('presence:online', 0, cutoff);

// Return remaining

return this.redis.zrange('presence:online', 0, -1);

}

// Check specific users

async areOnline(userIds: string[]): Promise<Map<string, boolean>> {

const cutoff = Date.now() - this.TTL * 1000;

const result = new Map<string, boolean>();

const pipeline = this.redis.pipeline();

for (const id of userIds) {

pipeline.zscore('presence:online', id);

}

const scores = await pipeline.exec();

userIds.forEach((id, i) => {

// ZSCORE replies arrive as strings (or null when the member is absent)

const raw = scores![i][1] as string | null;

result.set(id, raw !== null && Number(raw) > cutoff);

});

return result;

}

// Get online count for a channel

async channelOnlineCount(channelId: string): Promise<number> {

const members = await this.redis.smembers(

`channel:${channelId}:members`

);

const statuses = await this.areOnline(members);

return [...statuses.values()].filter(Boolean).length;

}

}

// Heartbeat on the WebSocket server

setInterval(async () => {

for (const [userId] of connections) {

await presence.setOnline(userId);

}

}, 30_000);

Backpressure: When Clients Can’t Keep Up

A slow client on a 3G connection can’t consume messages as fast as they arrive. Without backpressure, the server buffers messages in memory until it OOMs.

Per-Connection Buffer with Overflow

class BackpressureManager {

private buffers = new Map<WebSocket, any[]>();

private readonly MAX_BUFFER = 100;

private readonly MAX_BEHIND_MS = 30_000;

send(ws: WebSocket, event: any): void {

// Check if socket buffer is draining

if (ws.bufferedAmount > 65536) {

// Socket is backed up — buffer in application

this.bufferMessage(ws, event);

return;

}

// Flush any buffered messages first

this.flushBuffer(ws);

// Send current message

ws.send(JSON.stringify(event));

}

private bufferMessage(ws: WebSocket, event: any): void {

if (!this.buffers.has(ws)) {

this.buffers.set(ws, []);

}

const buffer = this.buffers.get(ws)!;

if (buffer.length >= this.MAX_BUFFER) {

// Drop the oldest message; the client is too far behind

buffer.shift();

// Flag a copy so other recipients sharing this event object are unaffected

buffer.push({ ...event, _dropped: true });

return;

}

buffer.push(event);

}

private flushBuffer(ws: WebSocket): void {

const buffer = this.buffers.get(ws);

if (!buffer?.length) return;

while (buffer.length > 0 && ws.bufferedAmount < 65536) {

const msg = buffer.shift()!;

ws.send(JSON.stringify(msg));

}

if (buffer.length === 0) {

this.buffers.delete(ws);

}

}

}

Client-Side: Detecting Gaps and Catching Up

When the server drops messages due to backpressure, the client needs to know and recover.

// Client-side catch-up on reconnect or gap detection

class RealtimeClientWithCatchup {

private lastEventId: string | null = null;

onMessage(event: any): void {

if (event._dropped) {

// Server dropped messages — fetch the gap

this.catchUp();

return;

}

this.lastEventId = event.id;

this.render(event);

}

async catchUp(): Promise<void> {

// Fetch missed messages from REST API

const response = await fetch(

`/api/messages?after=${this.lastEventId}&limit=100`

);

const missed = await response.json();

for (const msg of missed) {

this.render(msg);

this.lastEventId = msg.id;

}

}

}

Message Ordering and Delivery Guarantees

Real-time systems can deliver messages out of order across multiple servers. A chat where replies arrive before the original message is unusable.

Logical Clocks for Ordering

// Server-side: assign monotonic IDs per channel

class MessageOrderer {

private redis: Redis;

constructor(redis: Redis) {

this.redis = redis;

}

async assignId(channelId: string): Promise<string> {

// Atomic increment — guaranteed unique and ordered per channel

const seq = await this.redis.incr(`seq:${channelId}`);

// Combine with timestamp for global rough ordering

const ts = Date.now();

return `${ts}-${seq}`;

}

}

// Client-side: reorder buffer

class OrderedMessageBuffer {

private buffer: Map<string, any> = new Map();

private lastSeq = 0;

receive(message: any): any[] {

const seq = parseInt(message.id.split('-')[1]);

this.buffer.set(message.id, message);

// Flush consecutive messages

const flushed: any[] = [];

while (this.buffer.has(this.nextExpectedId())) {

const id = this.nextExpectedId();

flushed.push(this.buffer.get(id));

this.buffer.delete(id);

this.lastSeq++;

}

return flushed;

}

private nextExpectedId(): string {

// Simplified — in practice, match the full ID format

return `*-${this.lastSeq + 1}`;

}

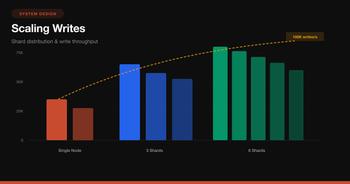

}Scaling Milestones: What Breaks When

Here’s what I’ve seen break at each order of magnitude:

10K connections

├── Single server, no pub/sub needed

├── In-memory connection map

└── Problem: nothing yet

100K connections

├── Multiple server nodes required

├── Need pub/sub backbone (Redis)

├── Need sticky sessions or connection registry

└── Problem: Redis pub/sub memory under fan-out spikes

500K connections

├── Redis Streams > Redis Pub/Sub

├── Fan-out batching critical

├── Need presence service (separate from main DB)

└── Problem: Thundering herd on reconnect after deploy

1M+ connections

├── Multiple pub/sub shards

├── Hybrid fan-out (write for small, read for large)

├── Connection-level backpressure mandatory

├── Need message catch-up service

└── Problem: Operating system file descriptor limits, TCP port exhaustion, GC pauses

OS-Level Tuning for High Connection Counts

# /etc/sysctl.conf — for servers handling 100K+ connections

# Increase max file descriptors

fs.file-max = 2097152

# Increase listen and packet backlog queues

net.core.somaxconn = 65535

net.core.netdev_max_backlog = 65535

# Increase ephemeral port range

net.ipv4.ip_local_port_range = 1024 65535

# Reuse TIME_WAIT sockets (applies to outgoing connections only)

net.ipv4.tcp_tw_reuse = 1

# Faster TCP keepalive detection

net.ipv4.tcp_keepalive_time = 60

net.ipv4.tcp_keepalive_intvl = 10

net.ipv4.tcp_keepalive_probes = 6

# Per-process limits: /etc/security/limits.conf

* soft nofile 1048576

* hard nofile 1048576

Putting It All Together: The Decision Framework

| Question | Answer | Pattern |
|---|---|---|
| Client sends data too? | Yes | WebSocket |
| Server push only? | Yes | SSE |
| < 50K connections? | Single server | In-memory connection map |
| 50K-500K connections? | Multi-server | Redis Pub/Sub backbone |
| > 500K connections? | Large scale | Redis Streams + sharded pub/sub |
| Small groups (< 1K)? | Fan-out on write | Push to each member |
| Large channels (> 1K)? | Fan-out on read | Store + notify, client fetches |
| Need “who’s online”? | Presence | Redis Sorted Set + heartbeat |
| Slow clients? | Backpressure | Per-connection buffer + drop policy |
| Messages must be ordered? | Ordering | Channel-level sequence numbers |
| Clients reconnect often? | Catch-up | Message store + Last-Event-ID |

The Golden Rule

Real-time at scale is not about picking the fastest transport — it’s about handling the fan-out multiplication gracefully. One event becoming 100,000 pushes is where systems buckle. Batch your fan-out, apply backpressure at every layer, and always have a catch-up path for clients that miss messages. The WebSocket connection is the easy part. The pub/sub fan-out is where you’ll spend 80% of your engineering time.

Further Reading

- WebSocket Protocol RFC 6455

- Server-Sent Events specification

- How Discord Stores Billions of Messages — real-world scale

- Scaling WebSockets with Redis — Redis pub/sub patterns

- NATS.io — high-performance messaging for microservices